Independent AI advisory for Australian boards, leadership teams, and regulated organisations. No vendor ties. Every recommendation tested on our own infrastructure.

Executive AI Risk & Strategy Briefings

The Problem: Boards, CISOs, and Agency Heads are trapped between pressure to adopt AI and the lack of a clear "mental model" for AI risk. Vendors are pitching solutions. Staff are already using AI on corporate data. Most organisations lack the internal depth to separate what works from what's hype.

The result: leaders trust AI-generated outputs without verification, granting algorithmic recommendations the weight of human expertise. Decisions get made on the basis of demos, not evidence.

Our Approach: We conduct a structured assessment of your organisation from the inside out. This is not a half-day checklist.

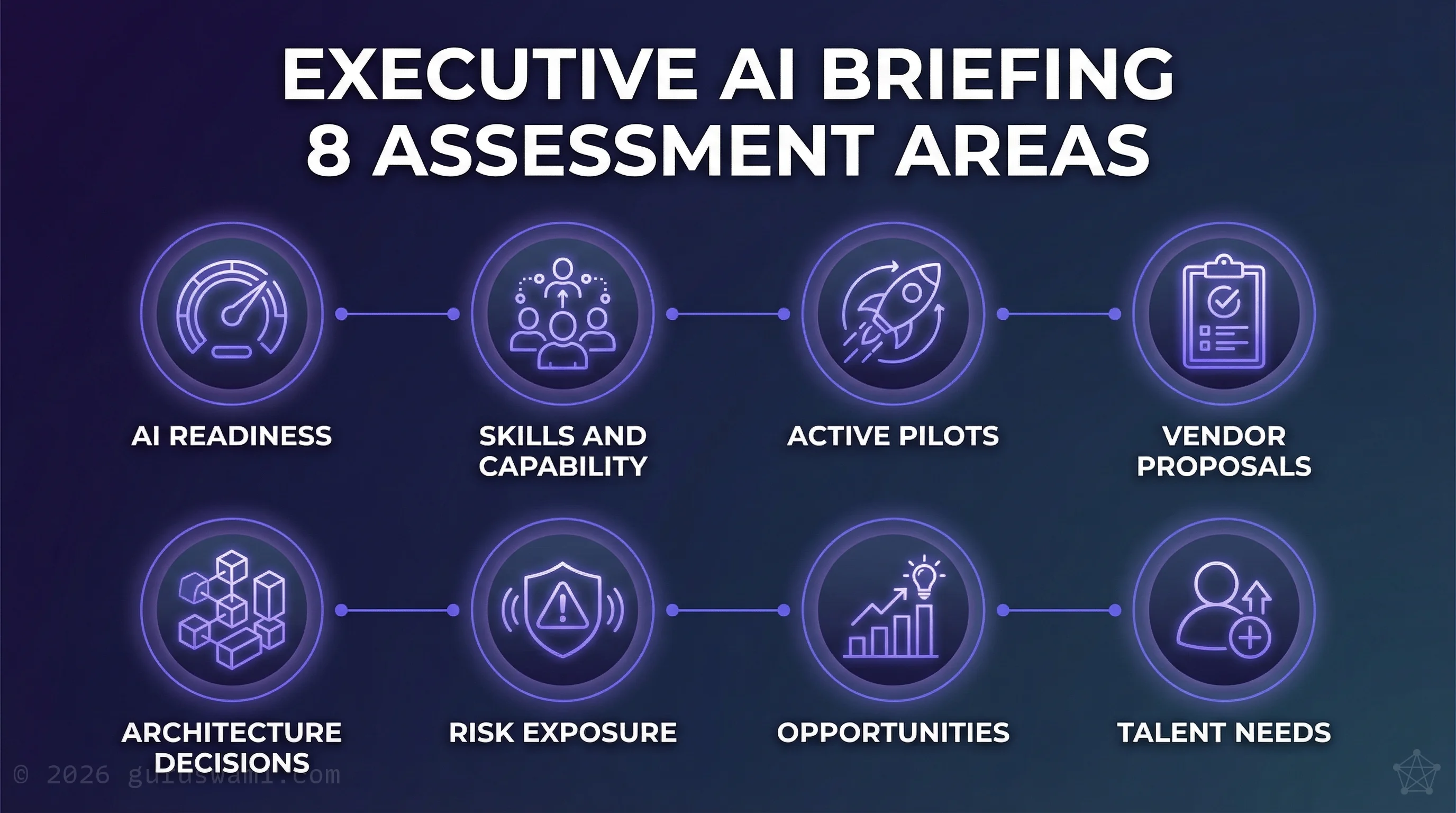

We assess AI readiness and maturity across your business units. We evaluate in-house skills and identify gaps. We review active pilots, vendor proposals, and architecture decisions. We surface risks your team may not be aware of, and opportunities they haven't considered.

The depth of the engagement depends on the complexity of your organisation. Some take days, some take longer. Every one is built from scratch for your specific situation. At the conclusion, we deliver:

- Board Presentation or Briefing Note: A concise document that dispels misunderstandings, maps your real position, and gives your leadership team the knowledge to make AI decisions with confidence.

- Talent & Skills Assessment: We assess your existing teams for AI capability gaps and professional development priorities, and screen external candidates on your behalf — resume review, gatekeeper interviews, and shortlist recommendations. Your hiring managers only spend time with people who are genuinely worth it.

- Pilot & Vendor Recommendations: Clear guidance on which initiatives to advance, pause, or kill.

- Opportunity Assessment: Emerging AI capabilities relevant to your sector, and specific opportunities your organisation's data, domain knowledge, or market position make feasible.

Board & C-Suite Advisory (Retainers)

The Australian regulatory landscape has shifted from "voluntary guidance" to "ongoing obligations." APRA CPS 230 has been in effect since 1 July 2025, and the Digital Transformation Agency's mandatory AI policy milestones follow throughout 2026. Governance is no longer a one-off project. It is a permanent obligation.

The Problem: Mid-market organisations and government agencies often lack the budget for a full-time Chief AI Officer (CAIO), yet face the same scrutiny from ASIC, APRA, and the OAIC. This creates blind spots around data leakage and supply chain security that generalist IT staff are not equipped to close.

Our Approach: Fractional "AI Security & Strategy Officer" capability via a fixed monthly retainer. We join your risk committees, challenge vendor proposals, separate marketing claims from technical reality, and translate it all into language your Board can act on.

We check whether "Australian-hosted" actually meets regulatory requirements.

- Regulatory Change Tracking: Written briefings when APRA, ASIC, DTA, or OAIC requirements change, mapped to your specific use cases. You know what changed, what it means for you, and what to do about it.

- Quarterly Risk Register Updates: AI-specific risk profiles maintained in a format that integrates with your existing risk framework.

- Ad-hoc Counsel: Priority access when a vendor proposal lands, an incident occurs, or a board question needs a rapid technical answer.

AI Project & Architecture Reviews

Most AI pilots never reach production. For regulated firms, independent validation of security and architecture claims before deployment is a mandatory risk control.

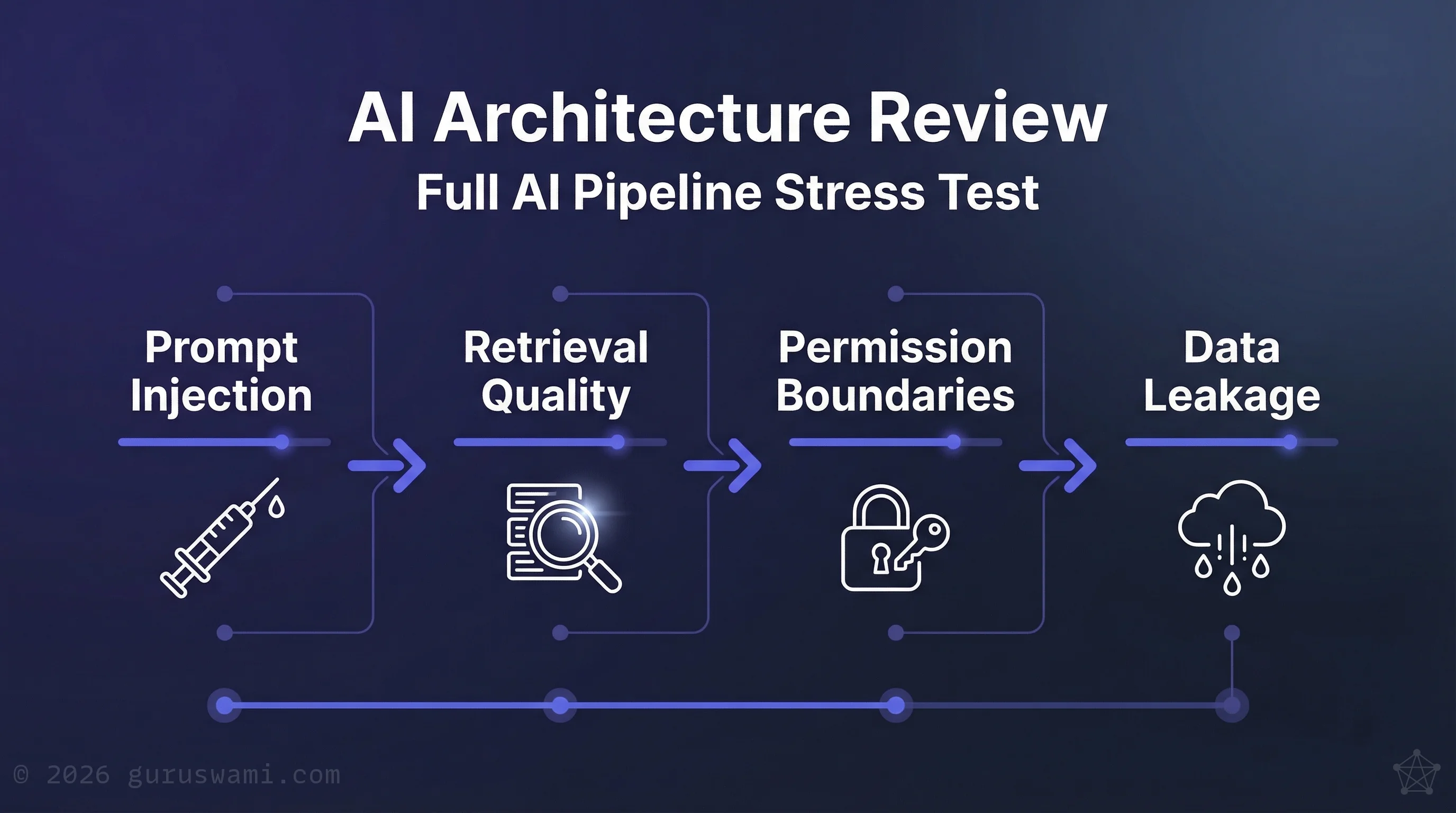

The Problem: Organisations are struggling with "excessive agency." An AI assistant with access to your CRM that can also send emails creates a data exfiltration path no firewall can see. Agents granted broad permissions bypass traditional Data Loss Prevention (DLP). Without independent review, these systems become covert data-leak channels.

Our Approach: We stress-test RAG (Retrieval-Augmented Generation) systems and agentic workflows against real attack classes. Prompt injection and jailbreaks. Data exfiltration through tool-use chains. Privilege escalation in agentic permissions. Retrieval poisoning.

We test for the failure modes vendors won't show you. Not with checklists, but with adversarial techniques developed in our own R&D lab. Where we find weaknesses, we recommend specific controls, architecture changes, or policy guardrails to address them. The deliverable is advisory. We tell you what's wrong and how to fix it. Your team implements the changes, and we're available to verify the remediation.

- Risk & Remediation Roadmap: Specific vulnerabilities, severity ratings, and actionable remediation steps your team can implement.

- Go/No-Go Recommendation: Clear recommendation for the steering committee on whether the system is production-ready.

- Compliance Mapping: How findings align with APRA CPS 230, ASIC expectations, and DTA requirements.

Sovereign / Air-Gapped Inference Design & Oversight

"Sovereign AI" is frequently a marketing term, not a technical reality. Sovereignty requires the entire inference pipeline to remain within Australian jurisdiction, not just data storage. No transit through international nodes during processing.

The Problem: Many "Australian-hosted" claims are misleading. Processing often transits international nodes. For Defence, Intelligence, and Critical Infrastructure, this is an unacceptable supply chain risk. Vendor claims need independent technical verification, not just contractual assurance.

Our Approach: Six years of R&D in distributed inference and air-gapped deployment, built on a physical lab running tensor-parallel inference across 2.5TB of unified memory. We design and verify architectures for on-premises inference. Data stays within your network across the full processing path. We work with your technical teams to validate architecture decisions, or assess their capability when needed.

We build ML-based triage and classification systems that operate without an internet connection. For Defence and Intelligence workloads where data cannot leave a secure facility, these systems process, classify, and route information entirely on local hardware.

- On-Premises Architecture Design: Custom blueprints for sovereign inference, from hardware selection through deployment and performance tuning.

- Independent Path Verification: Technical audits of vendor processing routes. We verify where data actually goes, not where the contract says it goes.

- Air-Gapped ML Systems: Design and oversight of classification, triage, and inference systems that operate with zero external connectivity.

How We Deliver

Every executive-level engagement is led personally by Paul Nevin. Strategic advisory, board briefings, and vendor challenge sessions are never delegated. For technical depth — architecture reviews, adversarial testing, infrastructure assessment — Paul works with a bench of vetted specialists, each selected for the engagement, briefed under NDA, and supervised throughout.

All engagements operate under strict NDA. We work in sensitive environments where data protection, discretion, and confidence are not optional.

The Typical Process

- Confidential discovery call. A short conversation under NDA to understand your situation, concerns, and objectives. No preparation needed from your side.

- Scope and fee. Within five business days, we come back with a written scope, timeline, and fixed fee. No open-ended billing.

- Assessment. Structured engagement tailored to your organisation. Duration depends on complexity — most briefings and reviews complete in two to four weeks.

- Written deliverable. A board-ready Briefing Note, risk assessment, or architecture review your leadership can act on. Designed for board minutes, not bookshelves.

- Follow-up. Optional retainer for ongoing advisory, or ad-hoc access when questions arise.

A note on scope: We provide technical advisory, security assessment, and governance guidance. We do not provide legal, financial, or insurance advice. Where our findings have legal or regulatory implications, we recommend engaging qualified legal counsel. We give you the technical reality. Your lawyers tell you what it means for your obligations.