- Data foundations. 80% of Australia's top data and AI leaders say their data isn't ready for AI (ADAPT, 2025). Everything downstream depends on this.

- Production RAG. Move beyond prototypes. Retrieval quality matters more than model selection.

- Security and observability. Prompt injection, data leakage, and missing audit trails are the primary threats. You cannot govern what you cannot see.

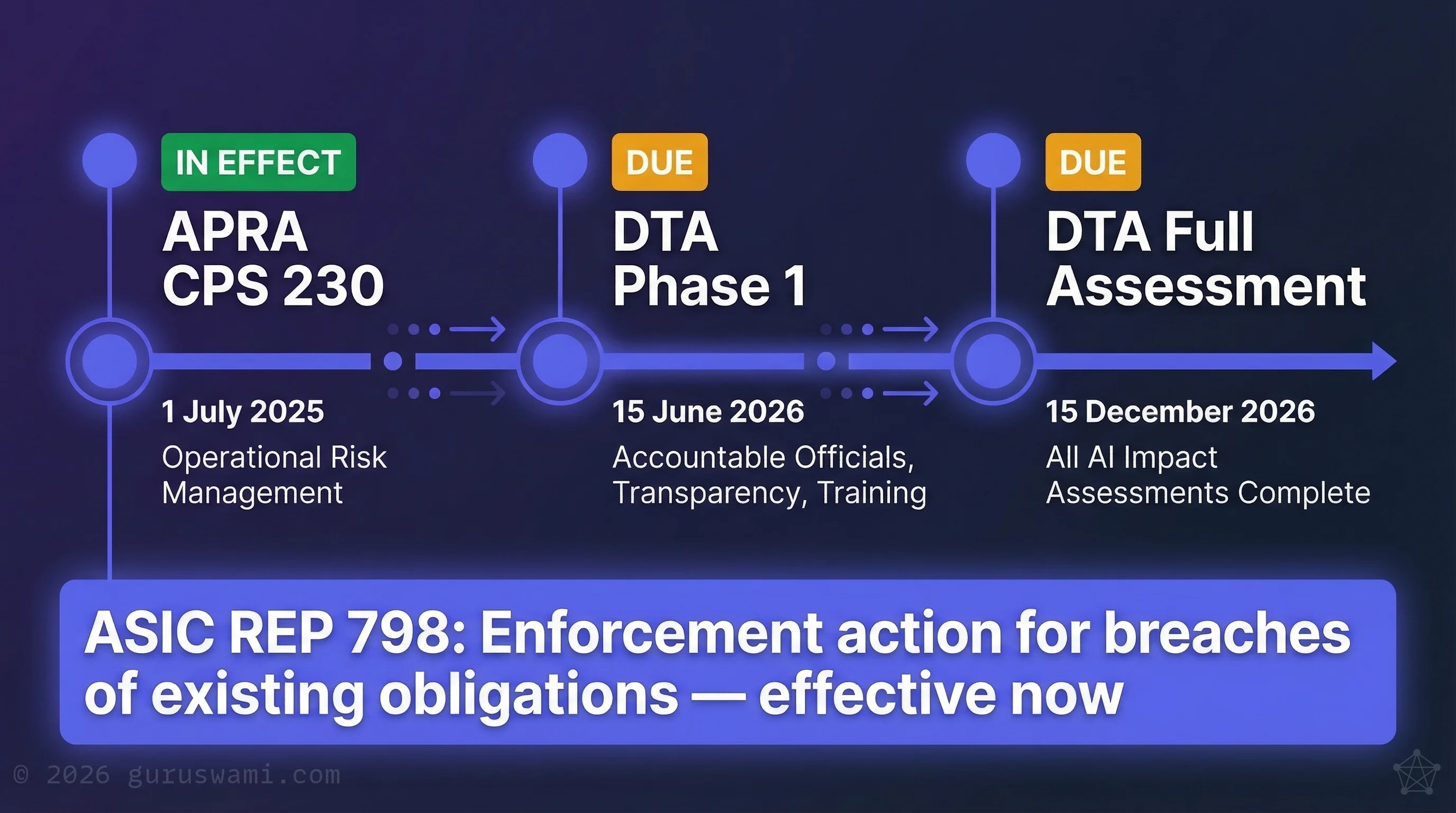

- The regulatory calendar. APRA CPS 230 is live. DTA mandatory policy lands June 2026. Impact assessments by December 2026. The deadlines are not negotiable.

- Workflow integration. AI use case registers, risk tiering, and value capture frameworks turn pilots into production.

The Execution Gap

Australian IT spending is projected at a record A$172.3 billion in 2026 (Gartner, 2025). Yet MIT-linked reporting on enterprise generative AI suggests up to 95% of pilots fail to deliver measurable ROI. That finding points the same way as Gartner's 2024 prediction that at least 30% of GenAI projects would be abandoned after proof of concept.

Record investment. Record failure. The gap between spending and outcomes is where the problem lives.

What Different Budget Owners Are Facing

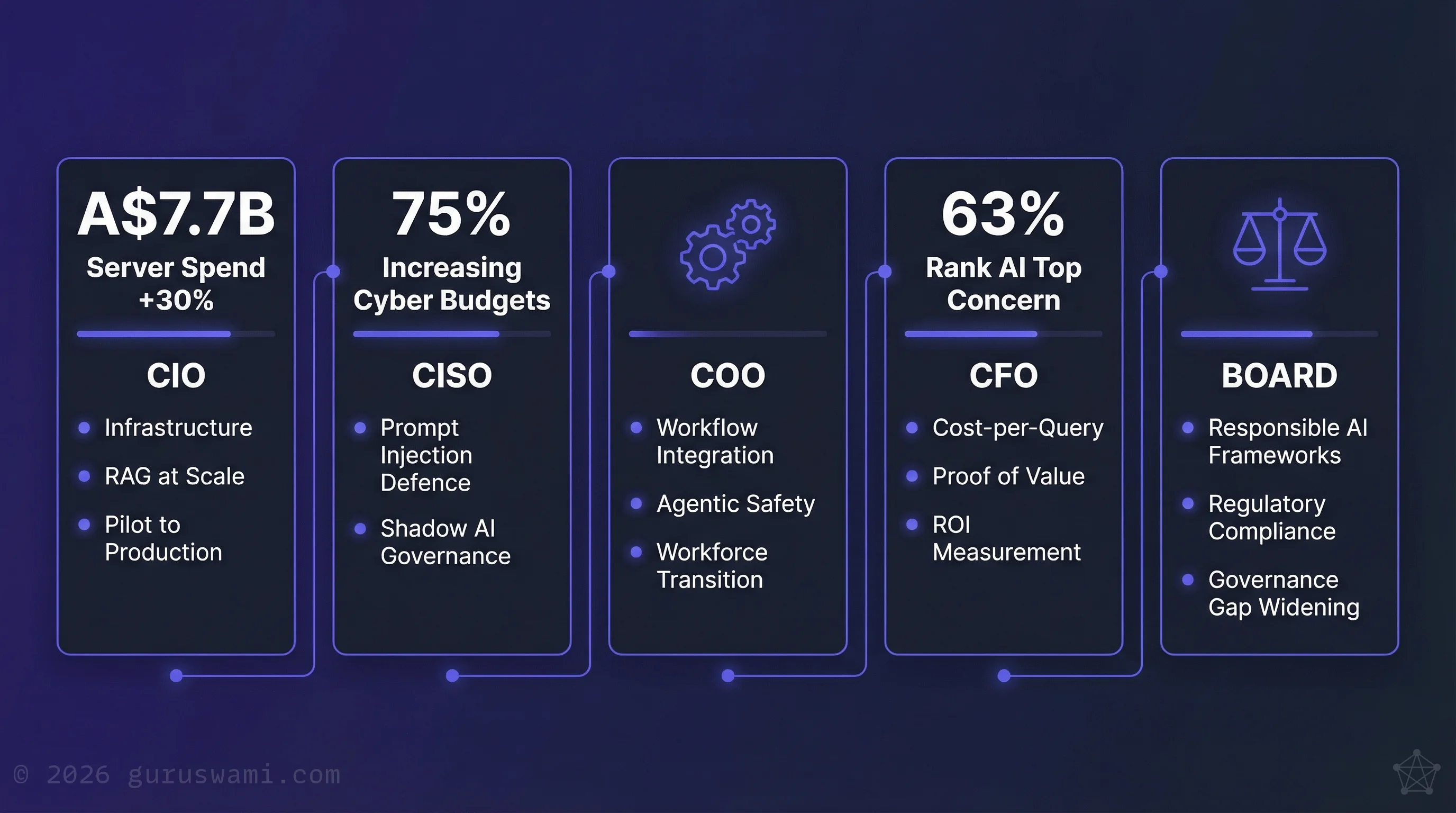

| Budget Owner | Priorities | Key Data Points |
|---|---|---|
| CIO | Infrastructure, RAG at scale, pilot-to-production | Server spending forecast up 30% to A$7.7B (Gartner, 2025); 52% of Government CIOs expect IT budgets to increase in 2026 (Gartner, 2025) |
| CISO | Prompt injection defence, shadow AI governance | 37% name AI as #1 cyber priority; 75% increasing cyber budgets (PwC, 2026) |
| COO | Workflow integration, agentic safety, workforce transition | Operational efficiency and autonomous agent deployment driving demand |
| CFO | Cost-per-query optimisation, proof of value | 63% of executives rank AI as top concern (KPMG, 2026); ROI measurement remains the primary barrier |
| Board / CEO | Responsible AI frameworks, regulatory compliance | ASIC REP 798 warns the governance gap is widening as adoption accelerates |

Why Pilots Stall

Three systemic problems keep blocking the transition to production:

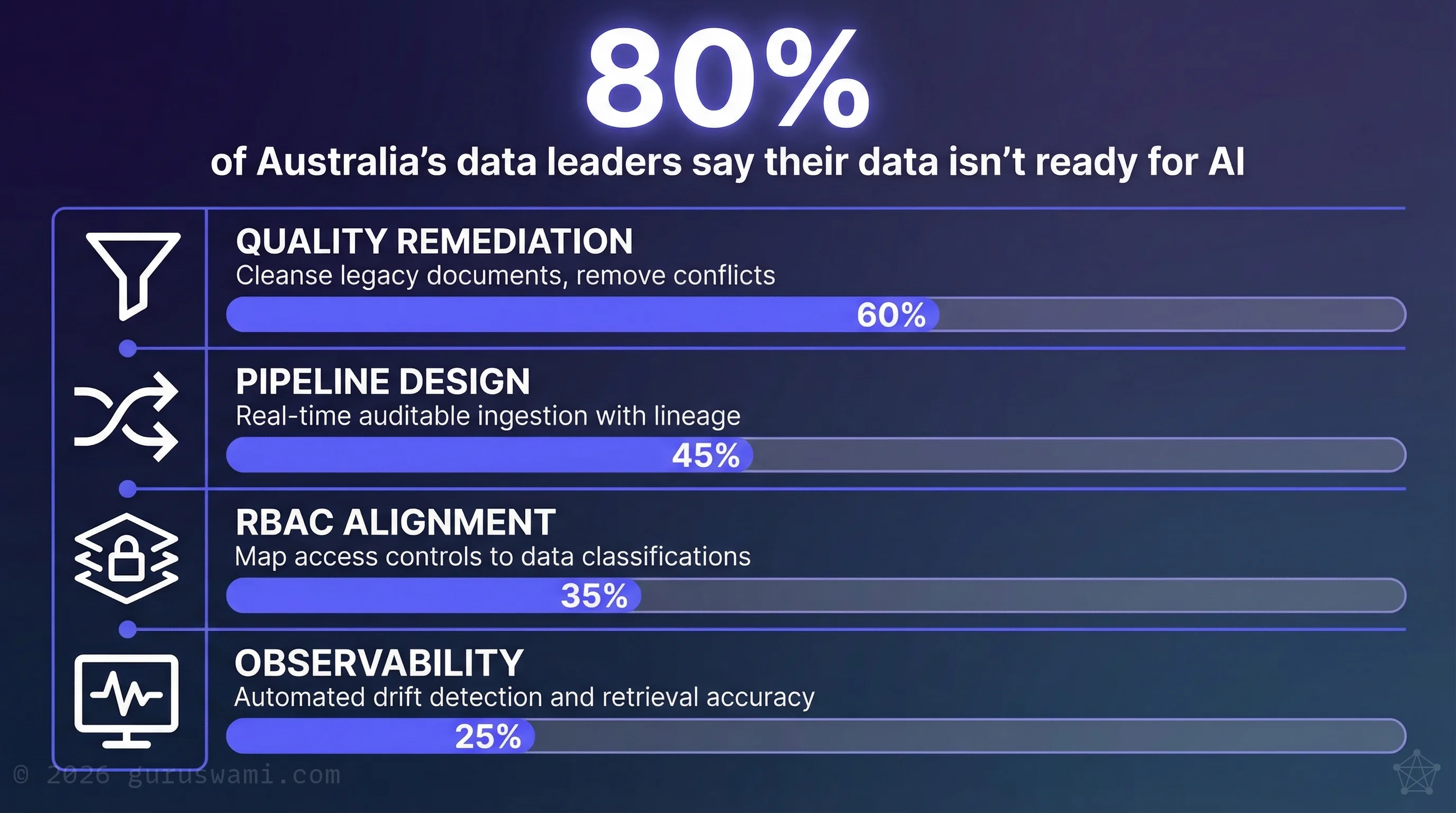

- Data isn't ready. 80% of Australia's top data and AI leaders say their data isn't ready for AI (ADAPT, 2025). Bad data in means confident nonsense out. AI presents incorrect information with high confidence, and decision-makers treat it as authoritative.

- ROI stays unclear. Gartner predicted at least 30% of generative AI projects would be abandoned after proof of concept by end of 2025. Mid-market firms are defunding pilots that can't demonstrate value, often because they never established how to measure it.

- Governance is missing. ASIC's REP 798 found that nearly half of financial services firms reviewed lack fairness or bias policies. In the 2026 enforcement environment, that invites penalties and board-level liability.

All three come back to the same root cause: data integrity. Our other briefs cover how to run pilots safely on local infrastructure and build the leadership capability to govern what follows. This one is the production playbook.

Pillar I: Data Foundations

Most 2025 pilots failed because they were built on unreliable data. The model performed exactly as designed. The data underneath it didn't. In 2026, the priority must shift to engineering reliable data pipelines before scaling anything.

Data Readiness Checklist

- Quality remediation. Systematic cleansing of legacy document sets to remove conflicting, outdated, or corrupted content.

- Pipeline design. Moving from static data dumps to real-time, auditable ingestion pipelines that maintain clear lineage from source to output.

- RBAC alignment. Mapping access controls to data classifications so AI agents can't surface sensitive information to users who aren't cleared to see it.

- Observability. Automated logging of data drift and retrieval accuracy to catch degradation before it affects decisions.
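One way to implement the RBAC alignment item is to filter retrieved documents against the requesting user's entitlements before anything reaches the model. The sketch below assumes a hypothetical four-level classification scheme and group-based ownership. `LEVELS`, `Document`, `User` and `filter_retrieved` are illustrative names and do not belong to any particular product.

```python
from dataclasses import dataclass

# Hypothetical classification scheme, ordered from least to most sensitive.
LEVELS = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}


@dataclass(frozen=True)
class Document:
    doc_id: str
    classification: str  # a key of LEVELS
    owner_group: str     # reader must belong to this group at "confidential" and above


@dataclass(frozen=True)
class User:
    user_id: str
    clearance: str       # highest classification this user may read
    groups: frozenset


def can_read(user: User, doc: Document) -> bool:
    """True if the user could open this document directly, outside the AI system."""
    if LEVELS[doc.classification] > LEVELS[user.clearance]:
        return False
    if LEVELS[doc.classification] >= LEVELS["confidential"]:
        return doc.owner_group in user.groups
    return True


def filter_retrieved(user: User, docs: list) -> list:
    """Drop retrieved documents the user is not entitled to see before they
    reach the prompt, so the agent cannot act as a side door."""
    return [d for d in docs if can_read(user, d)]


docs = [
    Document("d1", "public", "all-staff"),
    Document("d2", "confidential", "finance"),
    Document("d3", "restricted", "legal"),
]
analyst = User("u1", clearance="confidential", groups=frozenset({"finance"}))
print([d.doc_id for d in filter_retrieved(analyst, docs)])  # ['d1', 'd2']
```

The filter runs at retrieval time, so the access decision does not depend on the model choosing to withhold content. That keeps the decision deterministic and easy to audit.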

Sovereign AI: What the Claims Actually Mean

In Australia, "Sovereign AI" claims vary significantly in what they actually deliver. Organisations must distinguish between where data is stored and where it is processed.

Many "Australian-hosted" offerings are misleading. The data is stored onshore, but parts of the processing path, such as embedding generation, inference, fine-tuning, or logging, transit international nodes. Organisations requiring true sovereignty must verify that the entire processing path stays within Australian jurisdiction, not just the storage location.
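A first pass at verifying the processing path is to list every stage a request touches, record the region each stage runs in, and check that list against an allowlist of Australian regions. The stage inventory below is hypothetical; in practice it would come from vendor documentation or contractual attestations. The region identifiers are Australian regions of AWS, Azure and Google Cloud, and the list is illustrative rather than exhaustive.

```python
# Illustrative (not exhaustive) allowlist of Australian cloud regions.
AU_REGIONS = {
    "ap-southeast-2",        # AWS Sydney
    "ap-southeast-4",        # AWS Melbourne
    "australiaeast",         # Azure
    "australiasoutheast",    # Azure
    "australiacentral",      # Azure
    "australiacentral2",     # Azure
    "australia-southeast1",  # Google Cloud Sydney
    "australia-southeast2",  # Google Cloud Melbourne
}


def offshore_stages(processing_path: dict) -> list:
    """Return the processing stages whose region is not on the Australian allowlist."""
    return sorted(stage for stage, region in processing_path.items() if region not in AU_REGIONS)


# Hypothetical path for an "Australian-hosted" service: storage is onshore, inference is not.
path = {
    "storage": "ap-southeast-2",
    "embedding": "ap-southeast-2",
    "inference": "us-east-1",
    "logging": "australiaeast",
}
print(offshore_stages(path))  # ['inference']
```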

Pillar II: Making RAG Work in Production

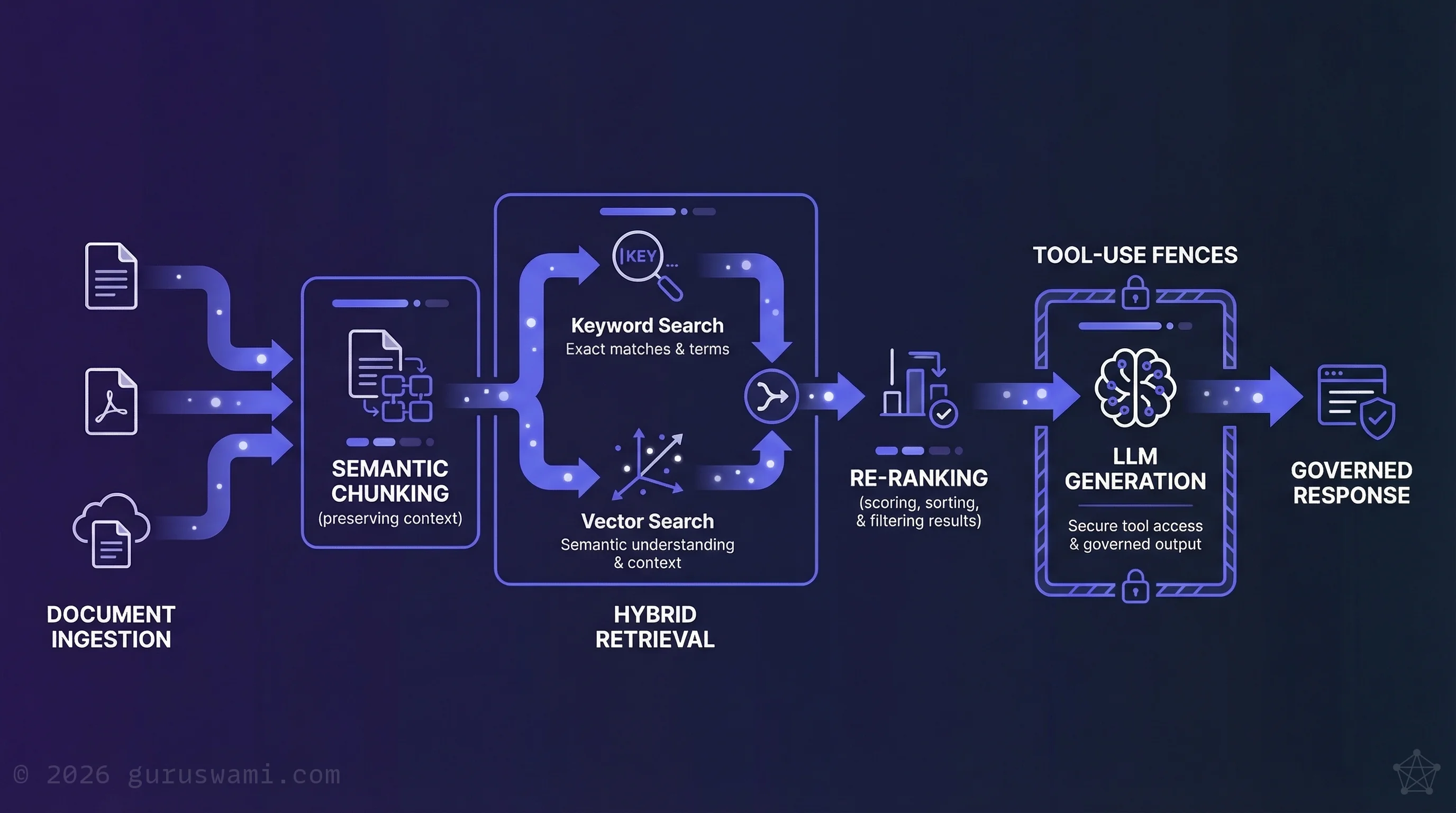

Retrieval-Augmented Generation (RAG) is the dominant enterprise AI pattern for 2026. It lets regulated organisations use large language models without training those models on proprietary data. Documents stay in a store the organisation controls and are retrieved as context only when a query needs them. But most RAG implementations are still prototypes bolted onto the side of existing workflows, not integrated into them.

Technical Architecture for Production RAG

- Hybrid retrieval. Moving beyond basic semantic search to combine keyword and vector retrieval for better accuracy on complex queries.

- Semantic chunking. Replacing fixed-size document chunking with strategies that preserve the context of Australian regulatory and legal documents.

- Re-ranking. Adding a second-stage scoring layer that reorders retrieved passages by relevance. This addresses the well-documented "lost in the middle" problem, where models underuse relevant context placed in the middle of long prompts.

- Tool-use fences. Hard limits on what an AI agent can execute. In regulated environments, non-deterministic behaviour needs containment, not just monitoring.
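A tool-use fence works best when ordinary code outside the model enforces it, because then no prompt, injected or otherwise, can argue its way past it. This minimal sketch assumes a hypothetical `ToolFence` wrapper that holds an allowlist and a per-session call budget. Agent frameworks expose their own hooks for this kind of control, and those hooks are not shown here.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


class ToolFenceError(RuntimeError):
    """Raised when an agent tries to step outside its fence."""


@dataclass
class ToolFence:
    allowed: frozenset    # tools this agent may call at all
    max_calls: int        # hard cap on tool calls per session
    calls: list = field(default_factory=list)  # permitted calls, kept for the audit trail

    def invoke(self, tool_name: str, tool_fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        if tool_name not in self.allowed:
            raise ToolFenceError(f"tool '{tool_name}' is not on the allowlist")
        if len(self.calls) >= self.max_calls:
            raise ToolFenceError(f"call budget of {self.max_calls} exhausted")
        self.calls.append(tool_name)
        return tool_fn(*args, **kwargs)


fence = ToolFence(allowed=frozenset({"search_policies"}), max_calls=2)
print(fence.invoke("search_policies", lambda q: f"results for {q!r}", "leave policy"))
# results for 'leave policy'
try:
    # e.g. an instruction injected via a retrieved email asks the agent to send mail
    fence.invoke("send_email", lambda to: None, "someone@example.com")
except ToolFenceError as err:
    print(err)  # tool 'send_email' is not on the allowlist
```

The blocked call never runs and is not recorded as a permitted call. Monitoring would only report the attempt after the fact; the fence stops it.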

Model selection matters less than retrieval quality. If the retrieval pipeline returns the wrong documents, no model can fix that.
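Because retrieval quality is the lever, it helps to see the hybrid retrieval step in concrete form. A common way to merge keyword search results (for example BM25) with vector search results is reciprocal rank fusion (RRF). RRF combines the two rankings without having to calibrate each system's raw scores against the other. This sketch assumes each retriever has already returned a ranked list of document IDs. The IDs are hypothetical.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list, k: int = 60) -> list:
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is the constant used in the original RRF paper.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc ID for a deterministic order.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical results from two retrievers for the same query.
keyword_hits = ["cps230-s4", "cps230-s2", "vendor-guide"]     # exact term matches
vector_hits = ["outsourcing-faq", "cps230-s4", "vendor-guide"]  # semantic neighbours

print(reciprocal_rank_fusion([keyword_hits, vector_hits]))
# ['cps230-s4', 'vendor-guide', 'outsourcing-faq', 'cps230-s2']
```

RRF rewards documents that both retrievers agree on. That is why `vendor-guide`, third in each list, outranks `outsourcing-faq`, which only one retriever found, even though `outsourcing-faq` was ranked first by that retriever.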

Pillar III: Security and Observability

Primary Threats

- Prompt injection. Attacks that hijack model behaviour through crafted inputs in documents, emails, or web pages. Mitigation: treat all user and retrieved inputs as untrusted. Implement least-privilege tool access.

- Data leakage. Sensitive information memorised by models or leaked through responses. Mitigation: DLP-integrated ingestion filters and automated scrubbing before embedding.

- Missing audit trails. Traditional endpoint detection doesn't capture AI decision paths. Mitigation: AI-specific decision logging that keeps each AI-assisted decision traceable for ASIC and APRA review.
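For the audit trail threat, one common pattern is an append-only decision log. Each entry records the query, the documents retrieved and the output, along with a hash of the previous entry, so any later tampering is detectable. This minimal sketch uses Python's standard `hashlib` and `json`. The `DecisionLog` class and its fields are hypothetical. A production system would also need durable write-once storage and access controls on the log itself, since the log holds prompts and outputs.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class DecisionLog:
    """Append-only log where each entry embeds the SHA-256 hash of the previous
    entry, so editing or deleting a past entry breaks the chain."""

    def __init__(self) -> None:
        self.entries: list = []

    @staticmethod
    def _digest(entry: dict) -> str:
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def append(self, user_id: str, query: str, retrieved_ids: list, output: str) -> dict:
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "query": query,
            "retrieved_ids": retrieved_ids,
            "output": output,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link to the previous entry."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True


log = DecisionLog()
log.append("u1", "Which vendors are material?", ["cps230-s4"], "Vendors A and B")
log.append("u2", "Summarise the outsourcing FAQ", ["outsourcing-faq"], "Summary text")
print(log.verify())  # True
log.entries[0]["output"] = "quietly edited"
print(log.verify())  # False
```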

Observability Requirements

- Groundedness metrics. Measuring whether outputs are actually supported by the retrieved source documents.

- PII monitoring. Automated auditing of outputs and logs for accidental disclosure.

- Token consumption tracking. Circuit breakers on agentic systems that can loop indefinitely and generate thousands of dollars in charges overnight.
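A token circuit breaker can be as simple as a counter that every model call reports into, which halts the run once a budget is spent. The sketch below does not depend on any framework. It assumes the caller can read prompt and completion token counts from each model response, which many hosted APIs report. The class name and limits are hypothetical. Converting tokens into dollars depends on the provider's pricing, which is not modelled here.

```python
class TokenBudgetExceeded(RuntimeError):
    """Raised when an agent run exceeds its token or step budget."""


class TokenCircuitBreaker:
    """Accumulates token usage across one agent run and trips when a limit is crossed."""

    def __init__(self, max_tokens: int, max_steps: int) -> None:
        self.max_tokens = max_tokens
        self.max_steps = max_steps
        self.tokens_used = 0
        self.steps = 0

    def record(self, prompt_tokens: int, completion_tokens: int) -> None:
        """Call after every model response; raises once either budget is exceeded."""
        self.tokens_used += prompt_tokens + completion_tokens
        self.steps += 1
        if self.tokens_used > self.max_tokens:
            raise TokenBudgetExceeded(
                f"{self.tokens_used} tokens exceeds budget of {self.max_tokens}"
            )
        if self.steps > self.max_steps:
            raise TokenBudgetExceeded(f"{self.steps} steps exceeds limit of {self.max_steps}")


breaker = TokenCircuitBreaker(max_tokens=10_000, max_steps=50)
try:
    while True:  # stands in for an agent that never decides it is finished
        breaker.record(prompt_tokens=1_200, completion_tokens=300)
except TokenBudgetExceeded as exc:
    print(f"stopped after {breaker.steps} steps: {exc}")
    # stopped after 7 steps: 10500 tokens exceeds budget of 10000
```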

Pillar IV: The Regulatory Calendar

The Australian regulatory landscape has shifted from voluntary guidance to active enforcement.

Key Milestones

- 1 July 2025 (in effect): APRA CPS 230. Mandatory operational risk management and stronger material service-provider oversight for all APRA-regulated entities, including AI vendors where relevant.

- 15 June 2026: DTA Mandatory AI Policy Phase 1. Federal agencies must appoint accountable officials, publish transparency statements, and complete mandatory AI training for all APS staff.

- 15 December 2026: DTA mandatory AI requirements — final milestone. All in-scope APS AI use cases must have completed impact assessments and related controls. All remaining mandatory requirements take effect.

Independent Challenge

Boards are increasingly requiring third-party verification of AI vendor claims. ASIC's REP 798 findings make it clear that organisations need transparency on fairness, bias, and human oversight. What boards need is not another 200-page policy document. It is a clear, concise assessment they can act on and defend in minutes.

Pillar V: Workflow Integration

AI needs to move from a separate tool into the operating model. The COO's role is to prevent shadow AI (staff using unapproved tools on corporate data) while capturing the efficiency gains available to organisations that move beyond experimentation.

Key Deliverables

- AI Use Case Register. A centralised inventory of every AI deployment, categorised by risk and owner.

- Risk Tiering Matrix. Standards for determining which uses require manual intervention and which can operate with monitoring only.

- Value Capture Framework. Mapping AI to specific tasks (triage, first-drafting, classification) and tracking the time and cost saved per task.
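The register and the tiering matrix can share one data structure: each entry carries its owner plus the risk factors the tiering rule reads. The factors and thresholds below are hypothetical placeholders and are not drawn from APRA, ASIC or DTA guidance. An organisation would substitute its own criteria.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    HIGH = "human approval before any action"
    MEDIUM = "human review of sampled outputs"
    LOW = "automated monitoring only"


@dataclass(frozen=True)
class UseCase:
    name: str
    owner: str
    affects_customers: bool   # outputs reach customers or citizens
    acts_autonomously: bool   # can execute actions, not just draft
    uses_personal_info: bool


def tier(uc: UseCase) -> Tier:
    """Hypothetical rule: autonomous action combined with customer impact or personal
    information is HIGH; any other case with at least one risk factor is MEDIUM;
    no risk factors is LOW."""
    if uc.acts_autonomously and (uc.affects_customers or uc.uses_personal_info):
        return Tier.HIGH
    if uc.acts_autonomously or uc.affects_customers or uc.uses_personal_info:
        return Tier.MEDIUM
    return Tier.LOW


register = [
    UseCase("Email triage", "COO office", affects_customers=False,
            acts_autonomously=False, uses_personal_info=True),
    UseCase("Policy first-drafting", "Risk team", affects_customers=False,
            acts_autonomously=False, uses_personal_info=False),
    UseCase("Claims auto-approval agent", "Claims ops", affects_customers=True,
            acts_autonomously=True, uses_personal_info=True),
]

for uc in register:
    t = tier(uc)
    print(f"{uc.name:<28} {uc.owner:<12} {t.name:<7} {t.value}")
```

Keeping the tiering rule in code next to the register means every new entry is tiered the same way. A change to the rule can then be reviewed like any other change.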

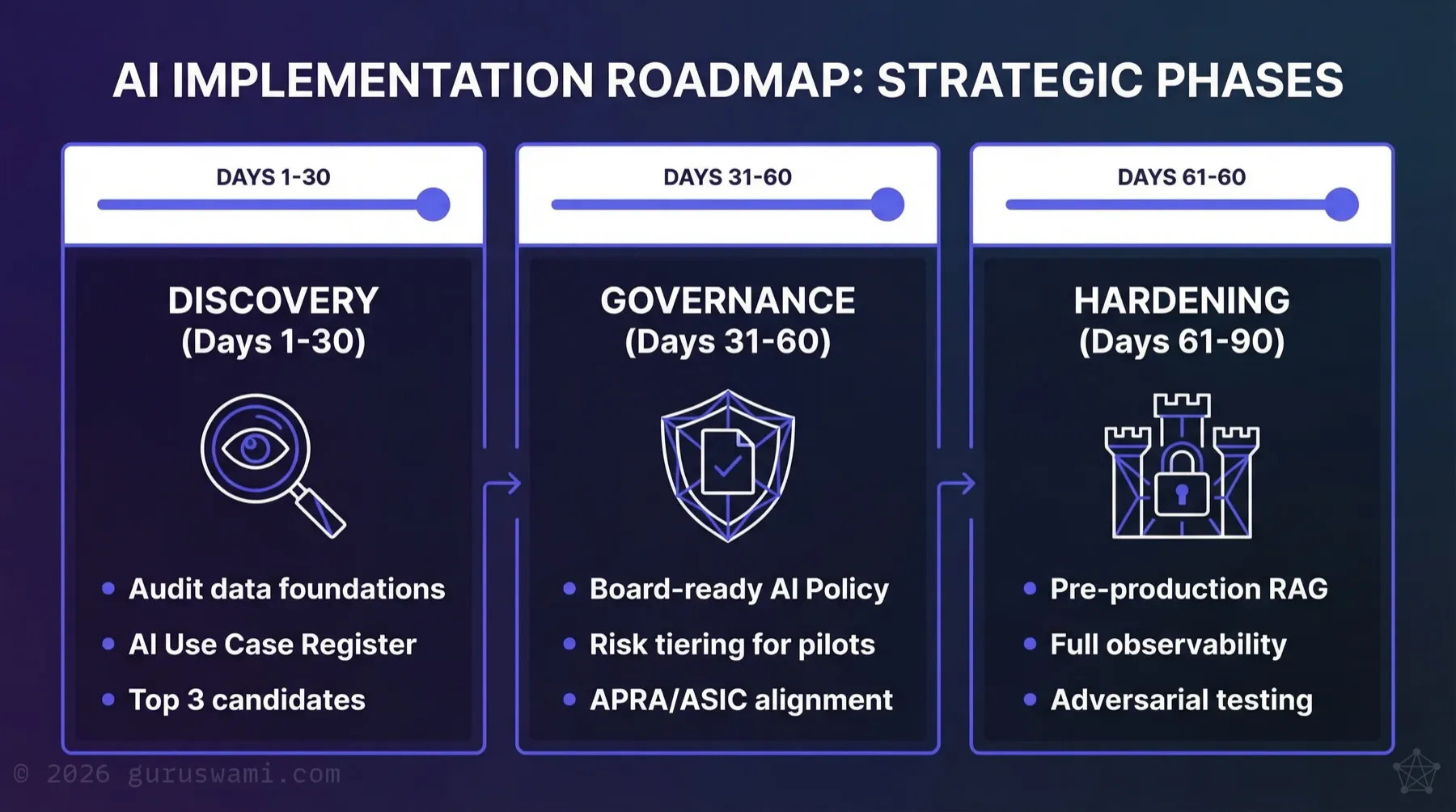

The 90-Day Production Readiness Plan

- Days 1–30: Discovery. Audit data foundations. Create the initial AI Use Case Register. Identify the top three highest-value, lowest-risk candidates.

- Days 31–60: Governance. Draft a board-ready AI Policy. Establish risk tiering for all active pilots, aligned with APRA and ASIC expectations.

- Days 61–90: Hardening. Move the top-tier RAG pilot into a pre-production environment with full observability, semantic chunking, and adversarial testing.

The path from pilot to production runs through data, retrieval, security, and governance. Get those right, and the model choice becomes straightforward.

- A$172.3 billion in projected 2026 IT spending, yet MIT-linked reporting suggests up to 95% of enterprise generative AI pilots fail to deliver measurable ROI. The gap between investment and production outcomes is where Australian organisations are losing money.

- 80% of Australia's top data leaders say their data is not ready for AI. Bad data in means confident nonsense out. Fix data foundations before scaling anything.

- Model selection matters less than retrieval quality. A weak retrieval pipeline paired with the best model available will generate a confident wrong answer.

- The regulatory calendar is concrete: APRA CPS 230 is already in effect, DTA Phase 1 lands June 2026, full assessments by December 2026. Governance is no longer optional.

- The 90-day path: audit data foundations, draft board-ready governance, harden your top RAG pilot into pre-production with full observability.

Guruswami Advisory helps Boards, CISOs, and CIOs move AI from fragile pilots to secure, compliant production under APRA, ASIC, and DTA requirements.
