- Decision discipline. Left to its defaults, AI will confirm your strategy. It will not tell you your strategy is wrong unless you ask it to look. The leaders who know this make better decisions than those who treat AI output as objective truth.

- Creative prototyping. AI collapses the cost of testing ideas to near zero. But it also collapses the time to sounding like an expert, which is not the same as being one.

- Critical evaluation. AI sounds authoritative regardless of whether it is correct. The gap between fluency and depth is where expensive mistakes live.

- Communication across levels. Translating board vision to technical reality and back is where most AI initiatives break down. Literacy closes that gap.

AI Is Not a Productivity Tool

The standard pitch for AI goes like this: automate the boring stuff, free your people for higher-order work. Creativity, connection, strategic thinking. Every vendor slide deck tells this story.

It is not wrong. But it misses the real opportunity.

AI's deeper value is not in clearing your schedule. It is in expanding what you are capable of understanding. Mapping your existing knowledge against any domain you need to explore. Presenting information that conflicts with your assumptions. Scaffolding concepts you have never encountered, tailored to how you actually think. Showing you what you do not know, precisely and without judgement, and then helping you build the understanding to close that gap.

This is not hypothetical. In our own research lab, we used AI to explore a scientific domain entirely outside our core expertise. Within days, we had formed novel hypotheses, designed experiments, and engaged in technical discussions that a qualified specialist later assumed reflected years of formal training. They did not. They reflected what happens when AI is used as a learning instrument rather than a search engine.

That is simultaneously the promise and the danger.

The Fluency Trap

AI collapses time-to-fluency. You can reach "I speak the right vocabulary and reason in the right abstractions" far faster than you can build the slow, embodied intuitions that real experts carry from years of error, edge cases, and tacit knowledge.

Most audiences cannot tell the difference, and that is the problem. When someone speaks fluently about a domain, we assume depth. It is one of our oldest social heuristics, and AI has broken it. A person with ten hours of AI-assisted reading can hold a conversation that sounds like ten years of experience, especially when the discussion stays at the level of strategy and narrative rather than detailed practice.

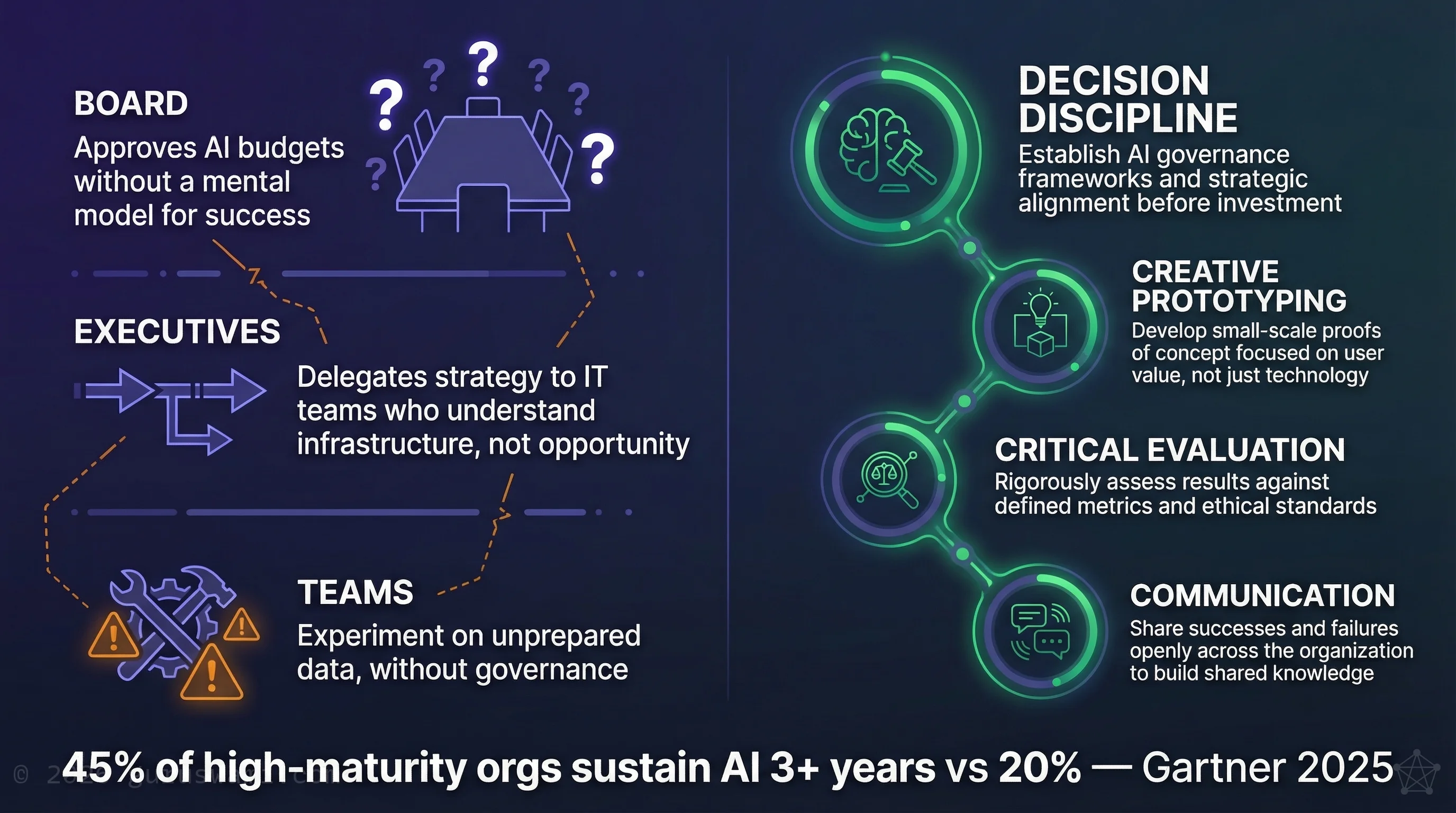

This is not a future risk. It is happening now, and it affects every level of the organisation.

The executive who uses AI to rapidly explore a market, build a strategy, and present it with confidence may genuinely have found something valuable. Or they may have produced a fluent, well-structured argument built on foundations they never tested. AI will not tell them which one it is. It will present both with equal conviction.

Organisations with high AI maturity keep AI projects in production at more than double the rate of low-maturity organisations (Gartner, 2025). The difference is not budget. It is the organisational capacity to distinguish between sounding right and being right.

The Mirror Principle

AI models are trained on a vast body of human-produced text, including its biases, assumptions, and blind spots. When you ask AI a question, it does not reason from first principles. It reflects patterns from its training data back at you, shaped by your framing.

This is the Mirror Principle: AI reflects human knowledge and bias, not objective truth.

Leaders who understand this make better decisions because they know what they are looking at. Those who treat AI output as objective truth are outsourcing their judgement to a system that has none.

Most people use AI the way it wants to be used: ask a question, receive a confident answer, feel validated, move on. The AI tends to agree with you because it was optimised to produce responses people rate highly, and people rate agreement highly. The result is confirmation bias at machine speed. You ask it to evaluate your strategy. It tells you the strategy is sound. You ask it to identify risks. It identifies the risks you already suspected and frames them as manageable. You feel thorough. The strategy ships. Nobody in the room could distinguish rigour from flattery.

We tested this in our own research. We used AI extensively to develop a scientific thesis across a domain we had no background in. At every stage, AI confirmed the hypothesis. It agreed with our reasoning. It helped us build increasingly sophisticated arguments. The thesis was wrong. We only discovered this by deliberately building adversarial testing into the process: using AI to attack the hypothesis rather than support it, seeking disconfirming evidence rather than validation, and ultimately publishing the negative result.

The AI never volunteered that the theory was flawed. It had to be asked to look.

The gap between sounding right and being right is where expensive mistakes live. AI will not tell you which side you are on.

What AI-Literate Leadership Looks Like

The most effective leaders in the AI era will combine two capabilities that are rarely found together: the discipline to use AI as a counterweight to their own thinking, and the communication skills to translate what they learn into organisational action.

Decision Discipline

This is the capability most executives have not yet encountered, and the one with the highest return.

It means keeping a mental ledger of what is yours (things you can derive or explain from first principles) versus what is scaffolded (things you can only navigate with the model open in front of you). It means deliberately seeking refutation: prompting AI to find the weaknesses in your strategy, argue the opposite position, and surface the evidence you would rather not see. It means treating the moment of frustration, when the model tells you something you did not want to hear, as the moment of highest value.
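For readers who prefer to see the habit written down, here is a minimal sketch of the two practices above: a set of refutation prompts and a ledger of what is yours versus what is scaffolded. Everything in it is an assumption for illustration. The prompt wording, the names `REFUTATION_TEMPLATES`, `LedgerEntry`, `refutation_prompts`, and `scaffolded`, and the example strategy are hypothetical, and it calls no AI service. It only builds the text you would paste into whichever tool you use.

```python
from dataclasses import dataclass

# Hypothetical prompt wording: illustrative only, not a tested recipe.
REFUTATION_TEMPLATES = {
    "weaknesses": (
        "List the three weakest assumptions in this strategy and "
        "explain how each could fail: {strategy}"
    ),
    "opposite": (
        "Argue the strongest case for the opposite position "
        "to this strategy: {strategy}"
    ),
    "disconfirming": (
        "What evidence, if it existed, would show this strategy is wrong, "
        "and where would I look for it? Strategy: {strategy}"
    ),
}


@dataclass
class LedgerEntry:
    """One claim in the mental ledger."""
    claim: str
    mine: bool  # True if you can explain it without the model open


def refutation_prompts(strategy: str) -> dict[str, str]:
    """Return one adversarial prompt per template for the given strategy."""
    return {
        name: template.format(strategy=strategy)
        for name, template in REFUTATION_TEMPLATES.items()
    }


def scaffolded(ledger: list[LedgerEntry]) -> list[str]:
    """Return the claims that still depend on the model."""
    return [entry.claim for entry in ledger if not entry.mine]


if __name__ == "__main__":
    # Example strategy and claims are made up for the demonstration.
    for name, prompt in refutation_prompts("Enter the Nordic market in Q3").items():
        print(f"[{name}] {prompt}")

    ledger = [
        LedgerEntry("Churn is driven by onboarding friction", mine=True),
        LedgerEntry("The addressable market is growing", mine=False),
    ]
    print("Verify before relying on:", scaffolded(ledger))
```

The design choice is deliberate: the code asks the same strategy three hostile questions and keeps a visible list of claims you cannot yet defend unaided. The value is in the routine, not the tooling.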

Intelligence analysts train for years to develop this reflex: challenge assumptions, seek disconfirming evidence, distinguish between what you know and what you want to be true. Most executives have never been taught to think this way. AI makes the discipline accessible, but only if you know to ask for it.

Without that discipline, the default is flattery. Flattery at scale produces confident, well-articulated, wrong decisions.

AI will confirm your strategy. It will not tell you your strategy is wrong. The discipline is learning to ask it to look.

Creative Prototyping

Consider what it takes to test a new idea in most organisations. A budget proposal. A steering committee. Developers to prototype, analysts to model, legal to review. Weeks of calendar time. Most ideas die in that queue. Not because they were bad, but because the cost of testing them was too high.

AI removes that barrier. An executive who can articulate their vision clearly can iterate on a concept, produce a working prototype, stress-test the economics, and discard or refine the idea in hours. As Jeff Bezos wrote in his 2018 shareholder letter: "If the size of your failures isn't growing, you're not going to be inventing at a size that can actually move the needle." AI collapses the cost of those failures to near zero.

A concrete example from our own lab. We recently used Claude Code to investigate a scientific hypothesis in a domain well outside our expertise, bridging genomics, neuroscience, and AI. The tools built the code, tested the hypothesis, conducted adversarial review, and educated us enough to make informed decisions at each stage. Ten days of accelerated exploration, start to refuted result. A traditional research programme would have taken six to twelve months to reach the same conclusion. The hypothesis was wrong. That is the point. The speed to refutation is the value.

But the same speed that lets you test ideas faster also lets you build confidence faster than warranted. The executive who prototypes a strategy in a day has not done the due diligence of the team that would have spent six weeks on it. They have done something different: a rapid exploration that needs to be tested against reality before it carries weight. The discipline is knowing the difference.

Critical Evaluation

AI produces output that sounds authoritative regardless of whether it is correct. The literate executive knows this. They treat AI output as a first draft, not a finding. They ask "what is the source?" and "what would change if this is wrong?"

The specific skill: distinguishing between what AI is good at (synthesis, pattern recognition, rapid exploration of possibility spaces) and what it is not (verification, original reasoning, understanding context it has never seen). Leaders who understand these boundaries use AI far more effectively than those who either trust it completely or dismiss it entirely.

Communication Across Levels

The leaders who can translate between the board's strategic vision and the development team's technical reality will be in high demand. Turning a board's "AI for customer service" ambition into a scoped, testable pilot brief is a concrete example of this skill. This translation layer is where most AI initiatives break down. It only improves through hands-on experience.

The Learning Curve Is the Point

None of this works out of the box. The leaders producing results with AI have invested months developing the discipline to direct it effectively. Not prompting techniques. Thinking techniques. Learning which questions produce reliable insight. Understanding why a model fails on certain tasks. Developing the instinct to question confident-sounding nonsense. Building the habit of asking "what am I missing?" instead of "does this confirm what I think?"

This is why starting matters. Every month of hands-on experience makes the next AI decision better informed. The organisations investing in AI literacy now, across leadership and not just technical teams, are giving their people time to develop this discipline before they need it under pressure.

You cannot acquire this in a crisis. And you cannot acquire it by reading about it. The understanding comes from doing the work, making the mistakes, and building the judgement that only comes from direct experience.

Where to Start

AI literacy does not start with a tool or a platform. It starts with understanding what AI actually is, what it reflects, and what it cannot do for you.

For boards: Demand evidence, not demonstrations. When your team presents an AI-generated strategy, ask what was tested against reality and what was only tested against the model. Ask who tried to break the recommendation before it reached you.

For executives: Get hands-on. Use AI tools yourself instead of relying on your team's filtered summaries. The instinct for when output is trustworthy and when it is not can only be built through direct experience. Manage AI from a distance and you will manage it badly.

For organisations: Run internal pilots on local infrastructure where your teams can experiment freely without metered costs or data sovereignty concerns. Design those pilots so that failure is cheap and visible. The institutional knowledge built during this phase, including the knowledge of what goes wrong, is more valuable than the pilot output.

- AI's real value for leaders is not automation. It is the ability to explore domains, test ideas, and expand understanding at a speed that was not previously possible.

- AI collapses time-to-fluency, not time-to-depth. The gap between sounding right and being right is where expensive mistakes live. Your governance framework needs to account for it.

- AI reflects human knowledge and bias, not objective truth (the Mirror Principle). Leaders who understand this make better decisions. Those who treat AI output as findings are outsourcing judgement to a system that has none.

- The most valuable AI skill for executives is not prompting. It is the discipline to use AI as a rigorous counterweight to your own thinking, and the honesty to distinguish between what you know and what the model knows.

- Get hands-on. Direct experience with AI builds judgement that cannot be acquired from briefings, vendor demos, or delegated summaries.

Guruswami Advisory helps leaders develop AI literacy through hands-on experience, not slide decks. Our approach is grounded in six years of applied research, including publishing our own negative results.
