We are building a small, specialist team to support AI security and governance engagements across Australian Government, financial services, critical infrastructure, and regulated enterprise.

Controlled innovation, not experimentation without guardrails.

The Quality of the Team Is the Quality of the Advice. We Hire Accordingly.

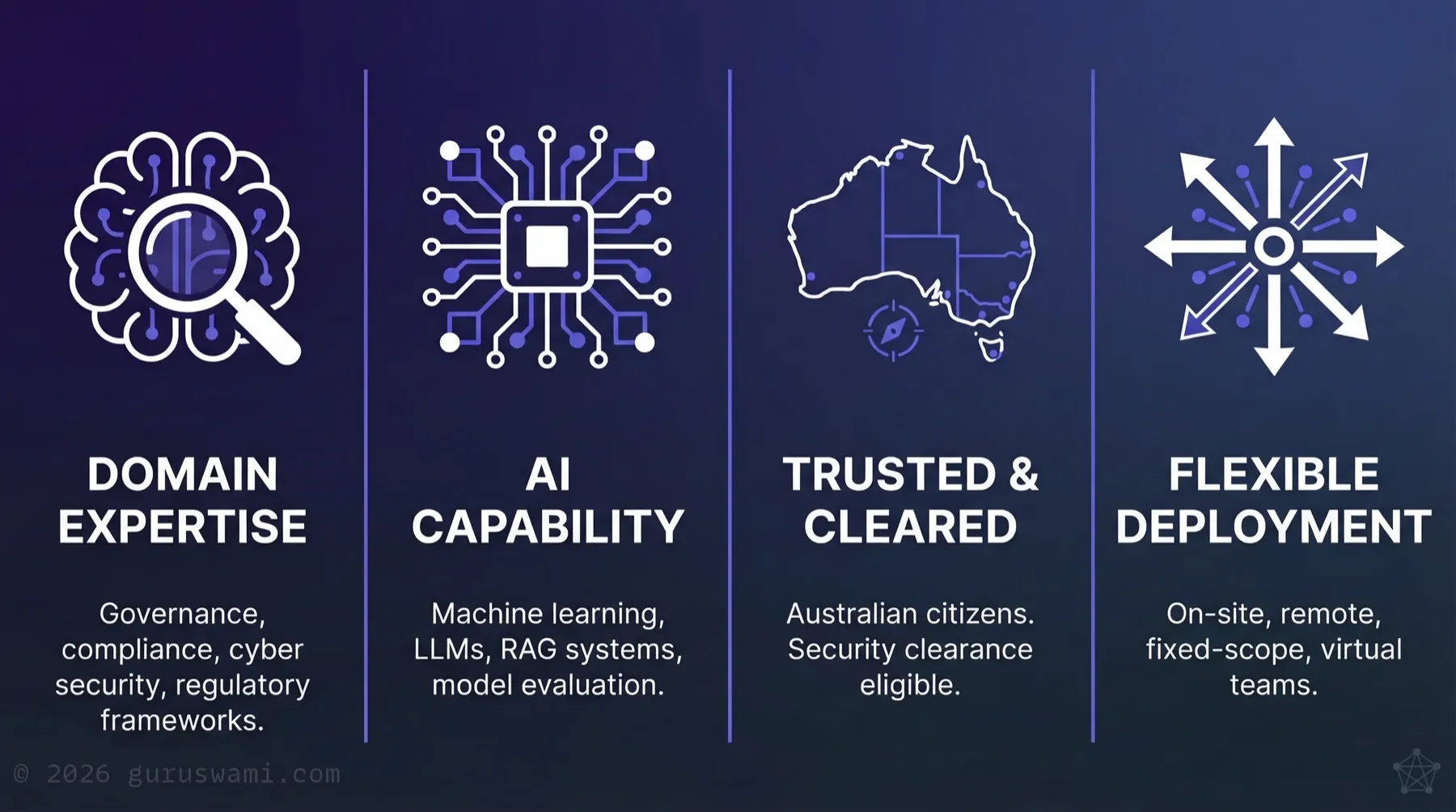

We hire for curiosity, passion, and domain specialisation first. AI is the tool. You are the expert. AI engineering experience is valuable, but this field is too new for anyone to claim a decade of it. We would rather hire a sharp compliance analyst, auditor, or security professional who is genuinely excited about AI than someone with an inflated CV full of buzzwords.

Our AI and machine learning experience goes deep. You bring the domain knowledge and the discipline. We combine both.

Have you played with prompt engineering? Discovered AI music or art generation? Installed a local LLM for private inference?

Have you wondered where your question actually goes when you press send? Whether that data is retained or discarded? What it could reveal about you if you use the tool long enough?

That curiosity is exactly what we want to see. A self-directed drive to experiment and ask questions is something we cannot teach.

Domains of interest:

- AI governance, risk, and compliance

- Cybersecurity and information assurance

- Data engineering and pipeline architecture

- RAG systems, model evaluation, and deployment

- Regulatory frameworks and policy: APRA CPS 230, DTA policy, ASIC expectations, PSPF

Requirements:

- Australian citizen, eligible to hold or obtain an Australian Government security clearance

- Based in Australia. Our clients are nationwide, with concentrations in Canberra, Sydney, and Melbourne.

What Our Engagements Look Like

- Assessing AI readiness, maturity, and capability gaps across business units

- Evaluating vendor proposals and sovereignty claims against technical reality

- Auditing and building AI governance aligned with APRA CPS 230, DTA mandatory policy, and ASIC expectations

- Reviewing architecture choices for AI security controls and access boundaries

- Detecting covert data leakage through AI inference streams

- Using AI tools and emerging techniques to hypothesise and experiment in controlled lab environments

All engagements are led by the Principal Advisor. You will work under direct oversight, following defined procedures and rules of engagement, in sensitive environments. You will have access to our distributed inference lab and R&D capability.

Engagements vary: on-site placements, remote deployments, fixed-scope projects, and virtual team arrangements. We match the model to the client's needs.

Why Guruswami?

This is not a traditional consulting firm. We are small by design. Every person we bring on directly shapes the quality of what we deliver.

You will work on problems alongside domain practitioners with remarkable depth of expertise and hard-won lessons to share. The challenges are not just national. Some are world-first. You will be at the forefront of AI innovation and adoption in Australia, working with organisations smart enough to see what is coming and determined to capitalise on it.

Direct applications only. Tell us what you have built, broken, or discovered with AI, and what it taught you. Send that and your CV to [email protected].